I haven't written a single line of code in months

In January 2026, Boris Cherny, the creator of Claude Code at Anthropic, stated that 100% of the code he produces is now written by AI. Not 50%, not 80%. 100%. He hasn’t written a single line of code in two months. Mike Krieger, Anthropic’s CPO, confirms it: Claude writes Claude, and 70 to 90% of the code across the entire company is now written by AI.

When I read that, I wasn’t surprised. Because on my end, I haven’t written a single line of code in months. And yet, I’m working on a complex project in finance, not some weekend side project.

This first article in a series about my journey with AI is a starting point. Not a manifesto, not a guide. Just the observations of a senior PHP developer with over 20 years of experience who has watched his profession transform in a matter of months.

ChatGPT: the amazement, then the disappointment

When ChatGPT came out, I did what everyone did: I tested it. I asked it to generate a Symfony command for importing users. The result blew me away. Clean structure, dependency injection, error handling. In a few seconds, I had code that would have taken me a good half hour to write.

Except the magic stopped right there.

As soon as the context became slightly real, with my entities, my services, my project conventions, the model fell apart. Makes sense: it couldn’t read the entire project. It generated generic code, sometimes good, often off the mark. Everything needed adapting, verifying, sometimes rewriting entirely. In the end, the time saved was marginal. An impressive gimmick, but a gimmick nonetheless.

Copilot: the wow effect, then the stagnation

Then GitHub Copilot arrived in my IDE. The first autocompletions gave me a real wow moment. It anticipated what I wanted to write, suggested entire blocks that were spot on. You felt augmented.

But once the initial amazement wore off, the limits showed up fast. The model invented methods that didn’t exist in my classes. It suggested calls to services with fictional signatures. It had zero global vision of the project. It completed what it could see in the current file, period.

We’ve all been there: accepting a Copilot suggestion, running the tests, watching everything blow up, realizing the suggested method doesn’t exist anywhere. Fix it. Start over. Over time, the net gain became debatable.

We plateaued there for a good while. AI in development was handy for boilerplate, regex, documentation. But for real engineering work on a real project? Not yet.

Claude Code: the turning point

And then Claude Code came along. I discovered it in an unlikely context: a small Jeedom project where I had to implement a completely undocumented feature. I had no idea how the system worked internally. At a rough estimate, it would have taken me a full day just to understand the mechanics before even starting to code.

I decided to delegate the task to Claude Code. And there, for the first time, I saw something fundamentally different.

In real time, I watched it browse through the project files. It read the source code, identified the relevant classes, and ran PHP scripts to observe their output. It was feeling its way through, exactly as a human would when faced with poorly structured, undocumented code. It tested hypotheses, adjusted its approach, backtracked when a lead went nowhere.

Thirty minutes later, the first version was functional.

A few iterations later, I had a testable version to deliver to my client. Two hours of total work, versus at least a full day if I had been on my own.

This was no longer just smart copy-paste. It was an agent that reasoned about the code, understood context, and took an investigative approach. The difference from everything I’d used before was radical.

Today: a partner, not a tool

Since that day, I’ve pushed the boundaries week after week. The models have evolved, capabilities have exploded. I’m no longer afraid to entrust very complex tasks to Claude.

It has become a real development partner. I use it to challenge my architectural ideas. We validate implementation steps together. It designs solutions in minutes that would have taken me several hours. From there, with a concrete result in front of us much sooner, we can iterate quickly or pivot to a completely different approach.

The outcome isn’t just that I code faster. It’s mainly that I arrive at a much higher-quality solution for the same time spent. Where before I would have stopped at “the first version that works” due to time constraints, today I can explore multiple architectures, compare approaches, and choose the best one. I reinvest the time AI frees up in thinking.

What’s coming in this series

This article is the first in a series where I’ll share concretely what I’ve put in place. My tools, my methods, my habits with Claude Code.

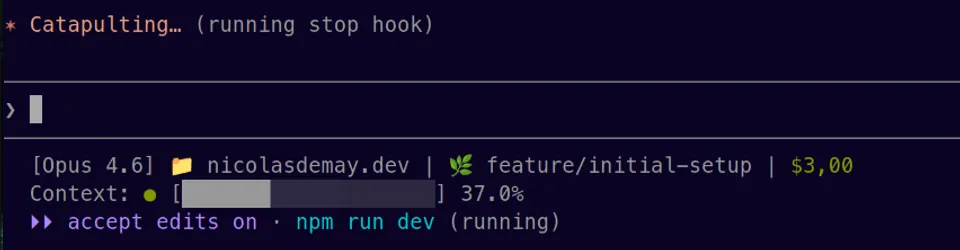

I’ll also comment on new developments as they come, because this field evolves at a staggering pace. Claude Code gets one or two new releases every single day. Some are minor, others completely change the game. What works today might be obsolete tomorrow. A workflow I’ve refined over weeks can become useless after a single update.

That’s exactly why I’m documenting this journey: to keep track of what works at a given point in time, and to see the evolution in hindsight.

Disclaimer

I’m not positioning myself as an AI expert. I’m a senior PHP developer who uses these tools daily on real projects and shares what he observes. My experience is not universal.

Everyone needs to build their own experience. Test, fail, adjust. What works for me on my Symfony projects might not work the same way for you. The important thing is to start, to experiment, and not to stay on the sidelines.

Because if Boris Cherny is right, and I think he is, the question is no longer whether AI will transform our profession. It’s how we adapt.

And that’s what we’ll be talking about in the upcoming articles.